LLM-Ready Websites: How to Structure Content So AI Recommends You

The new referral layer

How people find answers online has shifted. A growing share of queries that used to trigger a Google search now happen inside ChatGPT, Perplexity, Claude, or Google's own AI Overviews. The user never sees ten blue links. They see a summary with a handful of citations, and maybe they click one.

If your content is not in those citations, you are absent from the conversation. Generative Engine Optimisation (GEO) is the emerging discipline of making sure it is. Much of it overlaps with solid SEO fundamentals. Some of it does not, and ignoring the differences costs you visibility.

How LLMs actually source answers

Each platform sources differently, which matters for your strategy.

ChatGPT with browsing and Perplexity run live web searches against their own indexes and Bing, retrieve a handful of pages, and synthesise an answer with inline citations. They favour content that is easy to parse: clear headings, direct answers to common questions, and pages that load quickly without a wall of scripts.

Google AI Overviews uses Google's existing index and surfaces answers at the top of the SERP. Schema markup, E-E-A-T signals, and the usual SEO hygiene matter here. If you already rank well organically, you are most of the way there.

Claude, ChatGPT without browsing, and open-source models rely on training data with cutoffs. Being cited by these depends on your content existing widely on the open web before the training run, being referenced by other authoritative sources, and being structured cleanly enough for the scrape to preserve meaning.

The pattern is consistent. AI citations flow toward content that is clearly structured, factually dense, and written in a form that survives extraction. Generic marketing prose does not.

What makes content extractable

Direct answers near the top

LLMs reward pages that answer the question in the first 100-200 words and then expand. The inverted-pyramid style from journalism is the LLM-friendly format. A product page that buries the price under three screens of hero video will not get cited. A page that says "Our commercial cleaning starts at $180 AUD per hour in Melbourne CBD" in the opening paragraph will.
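One rough way to audit this is to check whether the facts you most want cited appear in the opening words of the page text. The sketch below is only a heuristic: the function name, the 200-word window, and the sample page are assumptions for illustration, and words are approximated by splitting on whitespace.

```python
def leads_with_answer(page_text: str, key_facts: list[str], window_words: int = 200) -> dict[str, bool]:
    """Report which key facts appear within the first `window_words` words."""
    # Collapse whitespace so line breaks in the page do not block a match.
    opening = " ".join(page_text.split()[:window_words]).lower()
    return {fact: " ".join(fact.split()).lower() in opening for fact in key_facts}


# Hypothetical page: the price is stated up front, weekend bookings are buried.
page = (
    "Our commercial cleaning starts at $180 AUD per hour in Melbourne CBD. "
    + "Filler sentence about the company history. " * 60
    + "We also offer weekend bookings."
)

print(leads_with_answer(page, ["$180 AUD per hour", "weekend bookings"]))
```

A fact that comes back False is a candidate for moving into the opening paragraph.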

Question-and-answer structure

Dedicated Q&A sections, FAQ blocks, and H2s phrased as questions all get extracted cleanly. That is why "How much does X cost?" and "What is the difference between X and Y?" style headings outperform clever marketing headings in LLM visibility.

Short, self-contained paragraphs

When an LLM retrieves a chunk of your page, it pulls 200-500 tokens at a time. If a single idea is spread across three paragraphs with a sidebar break, only one paragraph ends up in the context window and the rest is lost. Self-contained paragraphs where each one is a complete thought survive chunking.
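To see why this matters, here is a minimal sketch of a paragraph-based chunker. Real retrieval pipelines differ and are not public in detail, so treat it as an illustration only: tokens are approximated by whitespace-separated words, and the 300-word budget is an assumed value inside the range mentioned above.

```python
def chunk_paragraphs(text: str, max_tokens: int = 300) -> list[str]:
    """Greedily pack whole paragraphs into chunks of at most `max_tokens` words.

    A single paragraph longer than the budget still becomes its own chunk.
    """
    chunks, current, used = [], [], 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        size = len(para.split())
        if current and used + size > max_tokens:
            chunks.append("\n\n".join(current))
            current, used = [], 0
        current.append(para)
        used += size
    if current:
        chunks.append("\n\n".join(current))
    return chunks


# Two hypothetical paragraphs; the second depends on the first for its meaning.
doc = "\n\n".join([
    "Pricing starts at $180 AUD per hour. " + "detail " * 150,
    "That rate applies on weekdays only. " + "detail " * 150,
])

chunks = chunk_paragraphs(doc, max_tokens=300)
for i, chunk in enumerate(chunks):
    print(i, chunk[:40])
```

The second chunk opens with "That rate" but no longer contains the figure it refers to. Restating the number in that paragraph would make it self-contained.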

Concrete numbers and specifics

Models prefer content with real figures, dates, and named entities over vague claims. "30-60 per cent deflection rate" beats "significant improvement". "Available across Melbourne, Sydney, and Brisbane" beats "nationwide coverage".

Schema markup that actually helps

Schema.org structured data is no longer optional for GEO. Three types matter most.

Article and BlogPosting with headline, author, datePublished, and dateModified give AI crawlers the basics they need to attribute and date your content. Outdated posts lose citations fast because AI systems prefer recent sources.
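As a sketch, the four properties can be generated as JSON-LD from whatever your CMS stores. The function name, headline, author, and dates below are placeholders; the output would normally be embedded in a script tag of type application/ld+json.

```python
import json
from datetime import date


def article_jsonld(headline: str, author_name: str, published: date, modified: date) -> str:
    """Build BlogPosting JSON-LD with the four properties discussed above."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
snippet = article_jsonld(
    "How much does commercial cleaning cost?",
    "Jane Example",
    date(2025, 1, 15),
    date(2025, 3, 2),
)
print(snippet)
```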

FAQPage is the highest-leverage schema for LLM citation. Every question becomes a structured Q&A pair that is trivial to extract. Use it honestly. Only real questions with real answers, not stuffing.
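A minimal sketch of that structure, generated from the question-and-answer pairs that already appear on the page. The helper name and the sample pairs are illustrative; the nesting (FAQPage, mainEntity, Question, acceptedAnswer, Answer) follows the schema.org vocabulary.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs shown on the page."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Illustrative pairs; use the real questions and answers visible on your page.
faq = faq_jsonld([
    ("How much does commercial cleaning cost?", "From $180 AUD per hour in Melbourne CBD."),
    ("Which cities do you cover?", "Melbourne, Sydney, and Brisbane."),
])
print(faq)
```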

Organization and LocalBusiness with address, service area, and sameAs links to your authoritative profiles establish you as a real entity. For AU-specific queries like "web agency in Melbourne", this combined with a consistent NAP (name, address, phone) across the web does most of the work.

Schema is only useful if the visible content matches. Mismatched schema is worse than none.
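A simple consistency check can catch the most obvious mismatches. The sketch below uses only the Python standard library and assumes the page carries FAQPage JSON-LD as a single object per script tag. It flags schema questions that never appear in the visible text. The class and function names and the sample page are hypothetical.

```python
import json
from html.parser import HTMLParser


class _PageParser(HTMLParser):
    """Separate JSON-LD script contents from visible text."""

    def __init__(self):
        super().__init__()
        self.hidden_tag = None   # "script" or "style" while inside one
        self.is_jsonld = False
        self.buffer = []
        self.jsonld_blocks = []
        self.visible = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.hidden_tag = tag
            self.is_jsonld = dict(attrs).get("type") == "application/ld+json"
            self.buffer = []

    def handle_endtag(self, tag):
        if tag == self.hidden_tag:
            if self.is_jsonld:
                self.jsonld_blocks.append("".join(self.buffer))
            self.hidden_tag = None
            self.is_jsonld = False

    def handle_data(self, data):
        if self.hidden_tag:
            self.buffer.append(data)
        else:
            self.visible.append(data)


def unmatched_faq_questions(html: str) -> list[str]:
    """Return FAQPage questions from JSON-LD that are absent from visible text."""
    parser = _PageParser()
    parser.feed(html)
    parser.close()
    visible = " ".join(" ".join(parser.visible).split()).lower()
    missing = []
    for block in parser.jsonld_blocks:
        data = json.loads(block)
        if not isinstance(data, dict) or data.get("@type") != "FAQPage":
            continue
        for item in data.get("mainEntity", []):
            name = item.get("name", "")
            if " ".join(name.split()).lower() not in visible:
                missing.append(name)
    return missing


# Hypothetical page: the second schema question is not shown to readers.
page_html = """
<html><body>
<h2>How much does cleaning cost?</h2>
<p>From $180 AUD per hour.</p>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": [
 {"@type": "Question", "name": "How much does cleaning cost?",
  "acceptedAnswer": {"@type": "Answer", "text": "From $180 AUD per hour."}},
 {"@type": "Question", "name": "Do you work weekends?",
  "acceptedAnswer": {"@type": "Answer", "text": "Yes."}}
]}
</script>
</body></html>
"""

print(unmatched_faq_questions(page_html))
```

An exact-substring match is deliberately strict. It will also flag questions that were reworded on the page, which is usually worth a look anyway.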

Topical authority still matters

An LLM is more likely to cite a site that shows depth on a topic than one with a single shallow post. If you want to be the cited source on, say, "headless CMS for Australian retailers", you need a cluster of content: a pillar page, supporting posts on platform comparisons, implementation gotchas, and real case studies, internally linked and consistent in terminology.

The overlap with traditional SEO is strongest here. What changes is that the surface you are optimising for is a synthesised answer, not a ranked list. Breadth of topical coverage matters more than hitting any single keyword.

Tactical checklist

A few things we put into every site build where GEO matters.

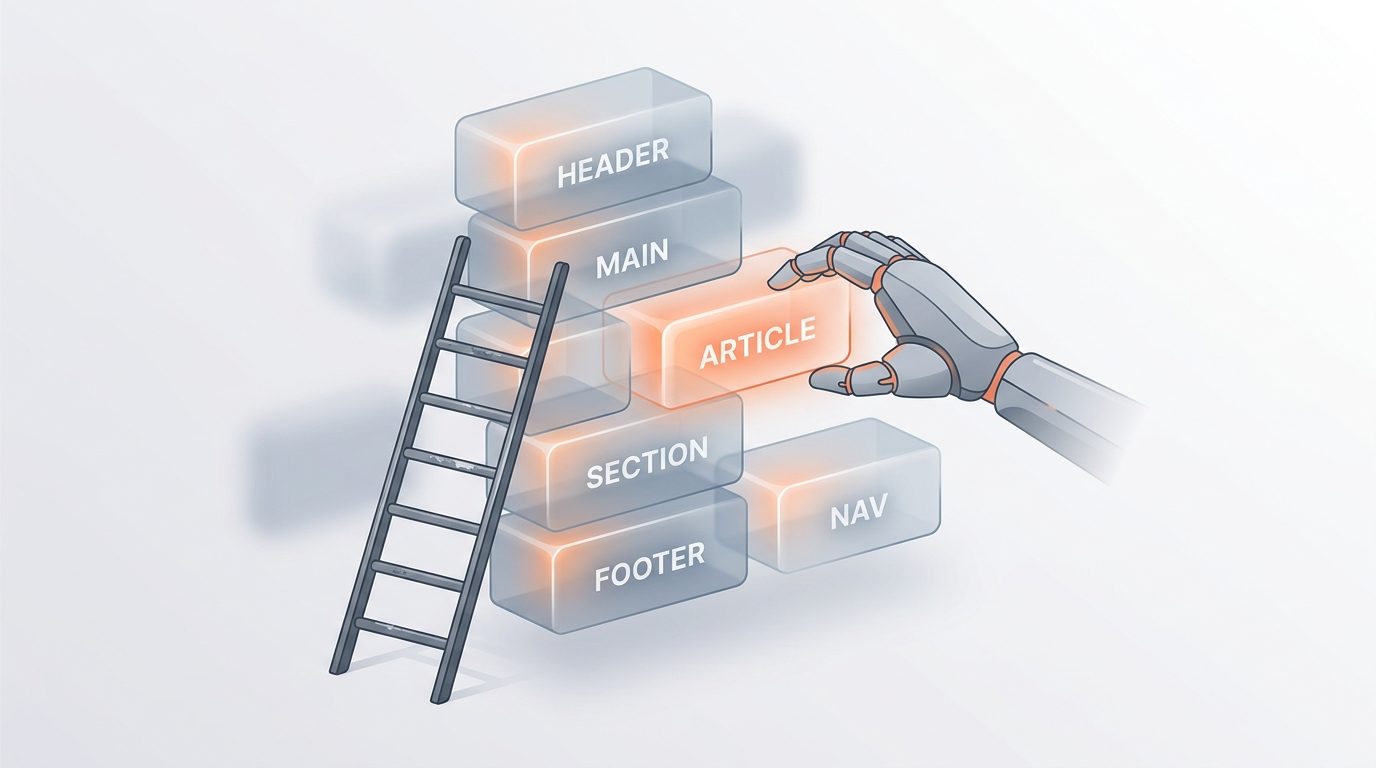

- Clean HTML semantics. H1-H3 hierarchy that matches content structure. No div-soup replacing real headings.

- Server-side rendered content. If an AI crawler sees an empty shell because your content loads via client-side JS, you are invisible. SSR or SSG for any content you want cited.

- Fast TTFB and low JavaScript weight. AI crawlers give up faster than Googlebot. Pages that take more than a few seconds to return a first byte drop out of the consideration set.

- Canonical and consistent URLs. LLMs deduplicate aggressively. Ten versions of the same page fragment your authority across all of them.

- A proper /llms.txt file. The emerging convention for telling AI systems what content is canonical, how to cite you, and which parts of the site to prioritise. Cheap to add, worth doing.

- Date hygiene. Visibly dated content, updated when it changes. Stale content is demoted hard.
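The first two checklist items can be spot-checked from the raw HTML your server returns, before any JavaScript runs. The sketch below is a rough audit built on the Python standard library. It lists the headings present in the served markup and flags skipped levels. The class and function names are hypothetical, and the rules it applies are an assumption about what a clean hierarchy looks like.

```python
from html.parser import HTMLParser

HEADING_TAGS = ("h1", "h2", "h3", "h4", "h5", "h6")


class HeadingOutline(HTMLParser):
    """Collect (level, text) for every heading in the served HTML."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._level = int(tag[1])
            self._buf = []

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            text = " ".join("".join(self._buf).split())
            self.headings.append((self._level, text))
            self._level = None

    def handle_data(self, data):
        if self._level is not None:
            self._buf.append(data)


def outline_problems(html: str) -> list[str]:
    """Flag missing headings and skipped heading levels in raw HTML."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    if not parser.headings:
        return ["no headings in served HTML (div-soup or client-side rendering?)"]
    problems = []
    previous = 0
    for level, text in parser.headings:
        if previous and level > previous + 1:
            problems.append(f"'{text}' skips from h{previous} to h{level}")
        previous = level
    return problems


print(outline_problems("<h1>Pricing</h1><h2>Plans</h2><h3>Starter</h3>"))
print(outline_problems("<h1>Pricing</h1><h3>Starter</h3>"))
print(outline_problems('<div id="root"></div>'))
```

Feed it the response body from a plain HTTP fetch of the page. An empty outline from a page that looks fine in the browser suggests the content only exists after client-side rendering.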

What to measure

Traditional rank tracking does not capture AI visibility. You need new instruments.

Tools like Profound, AthenaHQ, and Peec.ai monitor how often your brand and URLs appear in LLM responses for a defined set of queries. At a minimum, run a weekly manual check. Take 20 queries that matter to your business, run them in ChatGPT, Perplexity, and Google AI Overviews, and record whether you are cited, whether the citation is accurate, and which competitors appear.
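The manual check is easier to sustain if results go into a consistent record. Below is a minimal sketch of one possible shape for that log, with a per-platform citation rate and a competitor tally. The field names and the sample rows are invented for illustration and are not output from any of the tools named above.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class Check:
    query: str
    platform: str
    cited: bool                      # did our site appear as a citation?
    accurate: bool                   # was the cited claim correct?
    competitors: tuple[str, ...] = ()


def citation_rates(checks: list[Check]) -> dict[str, float]:
    """Share of checked queries per platform where we were cited."""
    totals, cited = defaultdict(int), defaultdict(int)
    for check in checks:
        totals[check.platform] += 1
        cited[check.platform] += int(check.cited)
    return {platform: cited[platform] / totals[platform] for platform in totals}


def competitor_counts(checks: list[Check]) -> Counter:
    """How often each competitor appeared across all checks."""
    return Counter(name for check in checks for name in check.competitors)


# Invented sample rows for one weekly run.
week = [
    Check("web agency in Melbourne", "ChatGPT", True, True, ("rival-a.example",)),
    Check("headless CMS for Australian retailers", "ChatGPT", False, False, ("rival-a.example", "rival-b.example")),
    Check("web agency in Melbourne", "Perplexity", True, False),
]

print(citation_rates(week))
print(competitor_counts(week).most_common())
```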

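Server logs are the other underused source. User agents like GPTBot, PerplexityBot, and ClaudeBot show you what AI crawlers are actually fetching. Google-Extended is different: it is a robots.txt control token rather than a crawler with its own user agent, so it will not show up in logs. If your key pages are not being crawled, nothing else matters.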
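A short script is enough for a first pass over access logs. This sketch assumes the common "combined" log format used by Nginx and Apache and matches bot names as substrings of the user-agent field. The sample lines, IP addresses, and paths are made up, and the user-agent strings are simplified.

```python
import re
from collections import Counter

# Google-Extended is omitted: it is a robots.txt token, and Google
# documents no separate user-agent string for it.
AI_BOTS = ("GPTBot", "PerplexityBot", "ClaudeBot")

# Combined log format: request, status, size, referrer, user agent.
LINE_RE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def ai_crawler_hits(lines) -> Counter:
    """Count requests per (bot, path) for known AI crawler user agents."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue
        for bot in AI_BOTS:
            if bot in match["ua"]:
                hits[(bot, match["path"])] += 1
                break
    return hits


# Made-up sample lines for illustration.
sample = [
    '203.0.113.5 - - [10/Mar/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [10/Mar/2025:10:05:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2025:11:00:00 +0000] "GET /faq HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [10/Mar/2025:12:00:00 +0000] "GET /faq HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0) Firefox/124.0"',
]

hits = ai_crawler_hits(sample)
for (bot, path), count in hits.most_common():
    print(bot, path, count)
```

Comparing the paths in this tally against your list of key pages shows which ones the crawlers have not fetched.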

The short version

Write for humans first, structure for machines second, and never lie to either. Clear headings, direct answers, real numbers, clean HTML, and honest schema. Most of what works for LLMs also works for Google and for readers, which is a pleasant change from past SEO cycles.

If you want a review of how LLM-ready your site actually is, with a specific plan for the gaps, we are happy to run one.